This case began, like many others, with a cold contact. In one of the Facebook groups, our employee saw a post asking something like “Who can help me with a data scraper?” Our employee replied, and the conversation began. Six months have passed since then, four of which we spent actively working. During this time we helped increase the revenue of the client’s online store by 74%, and now we are happy to share the story with you.

The client is a successful entrepreneur from the United States who owns an online furniture and fittings store. Her store sells inexpensive furniture, interior décor, paintings, carpets, and much more, and it generally targets the mid-price segment with good-quality goods.

Like every other entrepreneur who owns an online store, our client was looking for ways to increase her store’s profit. “How can I increase revenue without large investments in advertising and marketing?” she wondered. Eventually, after reading several articles and listening to some suggestions, she decided that the best way was to fill the store with new products and attract traffic from search engines. That was around the time she met us.

Problem statement

So, we have an online store and an owner who wants more profit. One of the easiest ways to increase sales is to expand the range of goods. This is where the problem lies, and it is exactly the problem our application solves.

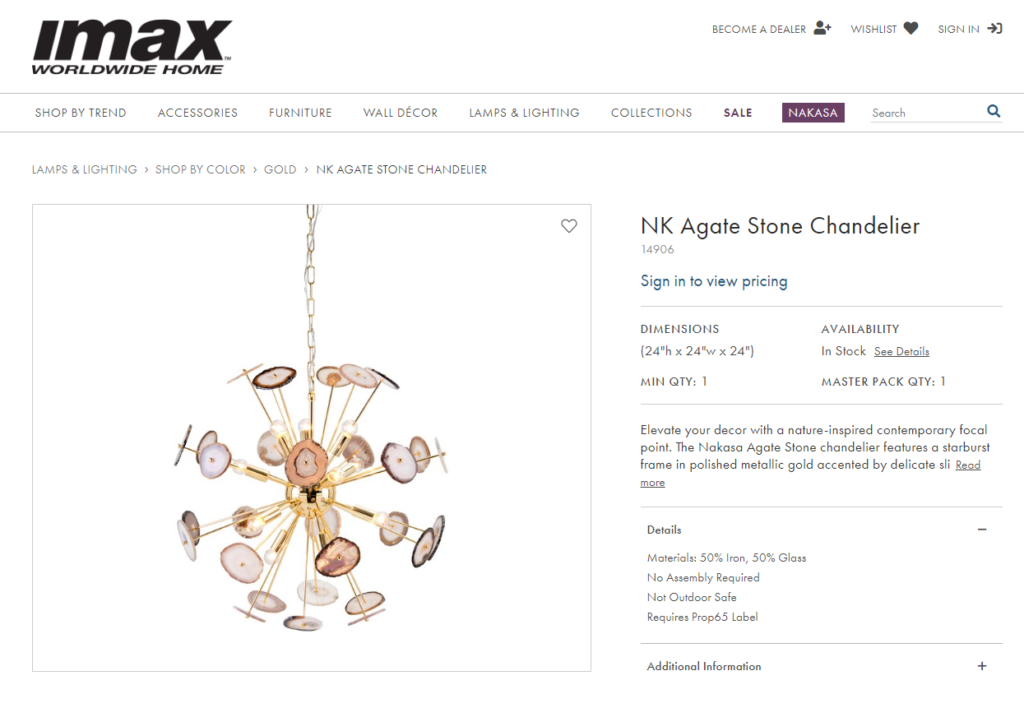

The entrepreneur who approached us had about 12 suppliers. Each of them offered excellent products and surprisingly fast delivery. Moreover, almost all of them offered long payment terms and great wholesale prices. Our client was satisfied with absolutely everything except one small detail.

Almost NONE of her 12 suppliers could provide a price list with pictures of the goods. 🤦♂️

It sounds funny, but in fact it was a real and serious problem. The suppliers were such large, slow-moving companies that getting management to approve a price list with pictures and send it out took several months. And, as you can imagine, the product range changed significantly during those months.

As a result, retailers working with these suppliers had to copy products from the suppliers’ websites by hand. Most of the wholesalers’ managers said: “It would be easier for you to copy the products from our site manually.”

CTRL+C, CTRL+V, repeat 🤯

So, how did our client solve this problem before contacting us? Yes, you guessed it. She copied products from the site by hand for a few days. Then she handed the work over to her employees, who continued copying the products. After a while, she realized that this was not an effective use of time and started looking for another solution.

Judge for yourself. Copying one product from one site to another by hand takes about 3 minutes; you can try it yourself and time it. That means you can process 20 products per hour, or no more than 160 products in an 8-hour working day. And that assumes you never make a mistake and everything goes perfectly.

By our estimates, the realistic speed of manual copying is no more than 70 products per day, even for a professional. That means you can copy no more than about 1,500 products per month by hand: 21 working days × 70 products per day = 1,470. That’s not much, is it? Many suppliers have 10,000 or more items in their price lists.
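To make the arithmetic explicit, here is a small Python sketch. The figures (3 minutes per item, an 8-hour day, 70 items per day, 21 working days, a 10,000-item catalog) come from the text above; the function names are ours and purely illustrative.

```python
import math


def items_per_day(minutes_per_item: int, hours_per_day: int = 8) -> int:
    """Ideal daily throughput: how many whole items fit in one working day."""
    return hours_per_day * 60 // minutes_per_item


def items_per_month(per_day: int, working_days: int = 21) -> int:
    """Monthly throughput at a steady daily rate."""
    return per_day * working_days


def days_to_copy(catalog_size: int, per_day: int) -> int:
    """Working days needed to copy a whole catalog, rounded up."""
    return math.ceil(catalog_size / per_day)


ideal = items_per_day(3)  # best case from the text: 160 items per day
realistic = 70            # the authors' estimate for a professional
print(ideal, items_per_month(realistic), days_to_copy(10_000, realistic))
```

At the realistic rate, a single 10,000-item catalog would take 143 working days, roughly seven months of one person’s time.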

What can you do in such a situation? As you might guess from the name of our project, the answer is to automate copying product cards from the supplier’s site to the client’s site! That is the conclusion our client came to, and that is why she asked for advice in a Facebook group.

Let’s automate goods scraping!

After some negotiations, the client confirmed that her suppliers did not object to her copying products from their sites to hers. Meanwhile, we carefully studied the suppliers’ websites and made sure our application could extract data from them. This is where our work with the client began.

The formal task was to extract product cards from the suppliers’ websites and export them as an .xlsx file plus a folder of product pictures. The client already had a module that imported data in this format (xlsx + images).
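The “table plus image folder” layout can be illustrated with a short sketch. The client’s importer expected .xlsx, and writing a real .xlsx file needs a third-party library such as openpyxl, so this dependency-free version writes CSV with the same structure instead: one row per product, with pictures saved to an `images/` folder and referenced by file name. `ProductCard`, `export_cards`, the column names and the `SKU_n.jpg` naming scheme are all hypothetical, not Data Excavator’s actual export format.

```python
import csv
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class ProductCard:
    sku: str
    name: str
    price: str
    description: str
    images: list[bytes] = field(default_factory=list)  # raw picture data


def export_cards(cards: list[ProductCard], out_dir: Path) -> Path:
    """Write one table row per product and save its pictures into images/.

    Rows reference pictures by relative file name so an importer can match
    them. CSV stands in for .xlsx here to avoid a third-party dependency.
    """
    image_dir = out_dir / "images"
    image_dir.mkdir(parents=True, exist_ok=True)
    table = out_dir / "products.csv"
    with table.open("w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["sku", "name", "price", "description", "images"])
        for card in cards:
            names = []
            for i, data in enumerate(card.images, start=1):
                name = f"{card.sku}_{i}.jpg"  # hypothetical scheme; assumes JPEG
                (image_dir / name).write_bytes(data)
                names.append(name)
            writer.writerow([card.sku, card.name, card.price,
                             card.description, ";".join(names)])
    return table
```

Keeping pictures as separate files rather than embedding them in the spreadsheet matches the client’s “xlsx + images” import format described above.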

Our program handles this kind of task well. Data Excavator is an application for automatically extracting (scraping) data from websites. With it, you can extract product cards from supplier and e-commerce websites, contact information from ordinary websites, data from social networks, and much more.

First of all, we should note that the client was not a programmer, so from the start she decided to buy not only a license for our application but also a technical support package. We gladly took on the job, as we always do when a task fits our application’s profile well. The client then gave us access to the suppliers’ sites and told us what information to copy from them.

It took us about a week to plan the work and test the application more thoroughly on these sites. Then we started extracting the data.

Scraping process

Once the application had been tested on all the sites, we started extracting data. Some sites were harder than others, but we handled them, and within about a week we had scraper settings ready for every site.

The settings covered logging in to each site with the client’s credentials, collecting links to all products in all categories, and then extracting information about each product: name, price, description, parameters, manufacturer, and other data. And, of course, the product pictures.
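For readers curious about what the per-product step involves, here is a minimal sketch using only Python’s standard-library `html.parser`. It is not how Data Excavator works internally. The CSS class names (`product-name`, `price`, `manufacturer`, `description`, `product-photo`) are hypothetical, since every supplier site needs its own mapping, and the login and link-collection steps are left out.

```python
from html.parser import HTMLParser
from typing import Optional

# Hypothetical mapping from a site's CSS classes to card fields;
# every supplier site would need its own version of this table.
FIELD_CLASSES = {
    "product-name": "name",
    "price": "price",
    "manufacturer": "manufacturer",
    "description": "description",
}
PHOTO_CLASS = "product-photo"  # hypothetical class on product <img> tags
VOID_TAGS = {"area", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class ProductPageParser(HTMLParser):
    """Collects mapped text fields and photo URLs from one product page."""

    def __init__(self) -> None:
        super().__init__()
        self.card: dict[str, str] = {}
        self.images: list[str] = []
        self._field: Optional[str] = None  # field whose text is being read
        self._depth = 0  # tags opened inside that field's element

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "img" and PHOTO_CLASS in classes and attrs.get("src"):
            self.images.append(attrs["src"])
        if tag in VOID_TAGS:
            return  # void elements never get an end tag, so skip depth tracking
        if self._field is not None:
            self._depth += 1
            return
        for cls in classes:
            if cls in FIELD_CLASSES:
                self._field, self._depth = FIELD_CLASSES[cls], 0
                break

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or self._field is None:
            return
        if self._depth == 0:
            self._field = None  # the field's own element has closed
        else:
            self._depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # collapse whitespace
        if self._field is not None and text:
            previous = self.card.get(self._field, "")
            self.card[self._field] = f"{previous} {text}".strip()


def parse_product_page(html: str) -> tuple[dict[str, str], list[str]]:
    parser = ProductPageParser()
    parser.feed(html)
    parser.close()
    return parser.card, parser.images
```

In a full pipeline this would run once per product URL collected from the category pages, and each resulting card would become one row of the export file.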

Sometimes we came across complex sites with the typical scraping problems: infinite scrolling, links without an href attribute, pictures presented in non-standard ways, and the like. Nevertheless, we overcame every difficulty along the way.
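One of these problems, links without a usable href, can be sketched with the standard library. The idea is to fall back to wherever the site actually stores the target URL. The fallback attribute names (`data-href`, `data-url`) and the `location.href = '...'` onclick pattern are assumptions; real sites vary, and infinite scrolling usually needs a headless browser or the site’s underlying paginated requests, which this sketch does not cover.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical fallbacks: attribute names and onclick patterns vary by site.
FALLBACK_ATTRS = ("data-href", "data-url")
ONCLICK_URL = re.compile(r"""location(?:\.href)?\s*=\s*['"]([^'"]+)['"]""")


class LinkCollector(HTMLParser):
    """Collects link targets even when an element has no real href."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        url = attrs.get("href") if tag == "a" else None
        if url and url.startswith(("#", "javascript:")):
            url = None  # placeholder href: the real target lives elsewhere
        for name in FALLBACK_ATTRS:
            url = url or attrs.get(name)
        if not url and attrs.get("onclick"):
            match = ONCLICK_URL.search(attrs["onclick"])
            url = match.group(1) if match else None
        if url:
            absolute = urljoin(self.base_url, url)
            if absolute not in self.links:  # keep first-seen order, no duplicates
                self.links.append(absolute)


def collect_links(html: str, base_url: str) -> list[str]:
    parser = LinkCollector(base_url)
    parser.feed(html)
    parser.close()
    return parser.links
```

Resolving every candidate with `urljoin` against the category page URL turns relative paths into absolute product URLs that can be fetched later.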

Over the next four months, whenever the client was ready, we extracted data from each of these sites. We checked the data to make sure it was complete and ready to import, and then the client uploaded it to her site.

Our client’s store runs on Shopify. In general, the import went smoothly. At one point we ran into a restriction on uploading large data files, but we got around it with a special module from a third-party vendor.

Hey, sales seem to be going up!

So, we extracted data from the supplier sites one by one and sent the results to the client. Gradually, her site filled up with new product cards: +5 thousand products, +2 thousand, +7 thousand, and so on. Not surprisingly, search engines noticed such rapid growth and began actively indexing the new pages.

After about 1.5 months, the client began to notice an increase in organic traffic to the site. To our delight, much of that traffic was going to the new product pages.

After 2.5 months, web traffic was up about 20%, and after 6 months the growth came close to 68%. 🚀

The client also noticed that most of this traffic came from people who had never visited her site before: new customers arriving through the new product categories!

Meanwhile, the store’s revenue began to grow thanks to a double effect. First, the store started attracting new customers for products it had not carried before: carpets, some fittings, candlesticks, and handmade paintings. Second, existing customers increased their average order value because they found new products they had not seen on the site before.

Revenue grew steadily month after month, and after 6 months it was up a record 74%!

The client was delighted. By the end of this short period she had some pleasant problems: she had to urgently hire new managers and several delivery drivers.

Job results

In total, we spent one active month on preparation and training, and about four more months continuing the work. We could have finished much faster, in a few weeks, but the client did not want to rush and chose suppliers and product categories carefully.

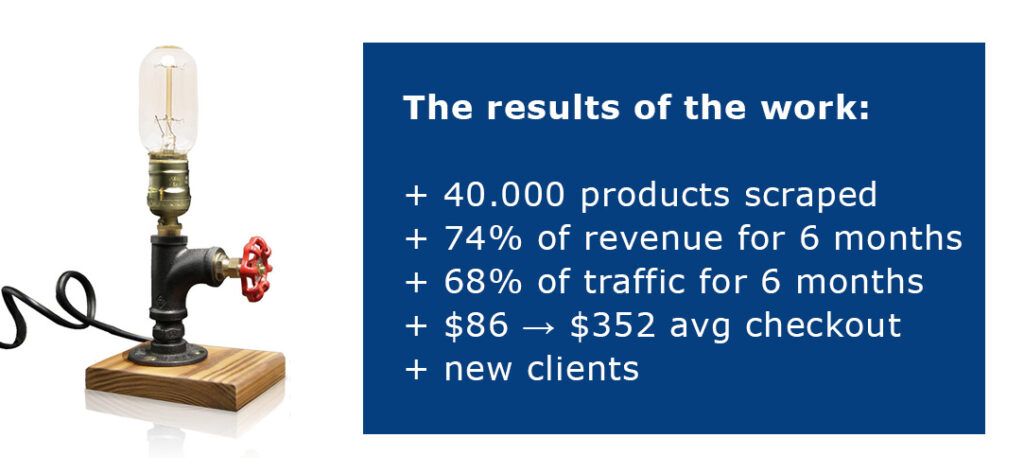

Over the whole period, we scraped and uploaded about 40 thousand items to the client’s website. Each item came with quality data: a description, parameters, and high-resolution photos. Each product was placed in the right category, which maximized conversion from visits and helped sell related products.

As a result, over 6 months our client got a 68% increase in web traffic, a 74% increase in revenue, and an increase in average order value from $86 to $352.

She had to expand her staff and work hard on speeding up service. Traffic and revenue are still growing, and we continue to work together, filling the client’s website with new product cards.

We are very glad that our scraper helped increase sales quickly and easily, saving hundreds of hours of tedious manual copying of product cards.

But it’s not all that simple 🤔

This case is a good example of what our application can do, but there are some nuances that cannot be overlooked. Without them, our work would not have had this effect, and the growth would probably have been more modest. Here is what’s important to note:

- The client already had a successful online store. Sales, customer service, payments, and delivery were already running smoothly. All that was missing was a wider assortment.

- The client sold only quality goods from proven suppliers. She knew her customers and did not try to sell them junk, consistently standing for good quality.

- The client got the suppliers’ agreement to scrape their products. The suppliers did not object, so there were no copyright violations.

- The client carefully reviewed the data we extracted and checked it for consistency, and her employees sorted the product information into the right categories.

- The client did not rush us; on the contrary, she preferred a measured pace and repeatedly checked the quality of the results.

Overall, our client was serious about getting good results. We are very glad our joint work was successful and hope our cooperation will continue to develop profitably.

How can we help other clients with similar tasks?

Our application lets you quickly extract large numbers of product cards from virtually any site, whether it belongs to a large company like Amazon, AliExpress, or BestBuy, or to a small wholesaler.

Our technical support is also at your service. Our experts are ready to dig into your problem and find the best and most affordable way to solve it.

As for the application itself, it includes many ready-made templates for popular sites. For sites such as Amazon or AliExpress, all you need to do is click “Use Template” and then “Run Scraper”.

And last but not least, a few links. You can download the application here. A demo key for one month is available here. You can send us a message here.